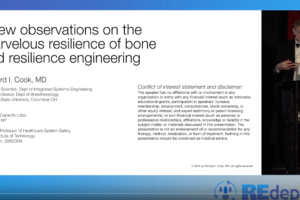

The Career, Accomplishments, and Impact of Richard I. Cook: A Life in Many Acts

Multiple professional and research communities feel a profound loss at the death of Richard I. Cook. Richard died peacefully at home on August 31, 2022, in the loving care of his wife Karen and his family. Dr. Richard Cook was a polymath who excelled in multiple careers, usually simultaneously. A physician and anesthesiologist, he was […]
