Multiple professional and research communities feel a profound loss at the death of Richard I. Cook. Richard died peacefully at home on August 31, 2022 in the loving care of his wife Karen and his family.

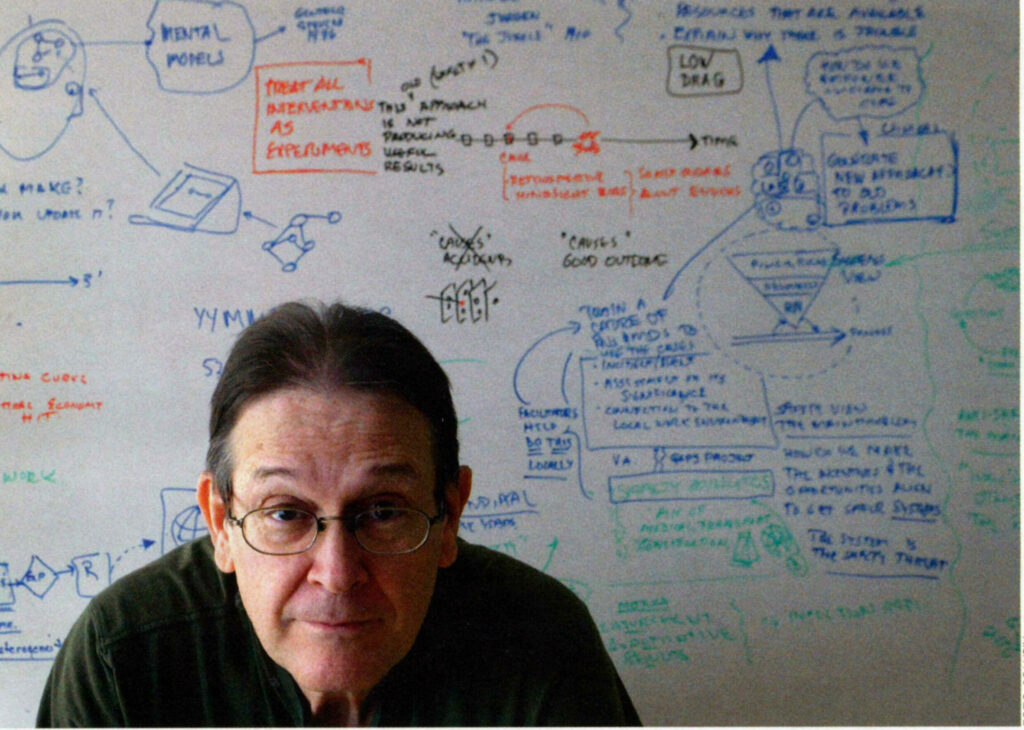

Dr. Richard Cook was a polymath who excelled in multiple careers, usually simultaneously. A physician and anesthesiologist, he was committed to providing personal, safe, and superb care to his patients. Richard was a software engineer at the beginning and then again at the end of his career, and he has been an important leader in several research fields. His work on How Complex Systems Fail is his most succinct and possibly most influential paper (though there are multiple candidates for this honor). His work in safety is based on Learning from Incidents and Accidents. He developed and taught techniques on this theme across multiple high-performance and high-risk domains. He used these techniques to study expertise, adaptive skills, and resilience of sharp-end practitioners in multiple fields, and he learned how their resilient performance defended systems from failures. During this work, he discovered that automation was often clumsy, created new burdens often at just the wrong times, fit poorly with the actual constraints of real (not imagined) cognitive work, or was being used for purposes never envisioned in its design. He was able to discover these fundamentals because he carefully observed the technical work at the sharp end of systems, focusing on situations where disruptions increased the tempo and criticality of operations. The results from these studies led Richard to innovate new designs for technology to support actual cognitive work at the sharp end of systems. He brought these novel and mold-breaking perspectives to practitioner communities, in particular in the last phase of his career to those who design, build, and operate the critical digital services that much of our society relies on.

Richard was an omnivorously curious student who graduated Cum Laude from Lawrence University in Appleton, Wisconsin in 1975 (in a one-of-a-kind program he created, including both physics and urban planning and more).

After graduation, he became a software engineer at Control Data Corp. (doing some finite element analysis — with punch cards!). In 1980 he decided to go to medical school, in part, because computers promised to support clinical work someday in the future, receiving an MD from the University of Cincinnati in 1986.

He joined the Department of Anesthesiology at the Ohio State University in 1987 as a researcher investigating human performance and physician-computer interaction during incidents in the operating room. In 1988 he began a cooperation with David Woods’ team, who had just moved to the Department of Industrial and Systems Engineering (ISE) at the Ohio State University. The partnership led to funding from the Anesthesia Patient Safety Foundation to study what constitutes expertise in anesthesia and how this expertise contributes to patient safety. Working with Woods and graduate students in the Cognitive Systems Engineering Laboratory (ISE program) allowed Richard to expand his work on technology, complexity, and risk to non-medical fields such as aviation, air traffic control, space operations, and semiconductor manufacturing. This synergy helped produce the first edition of Behind Human Error in 1994 (2nd edition 2010). During this time of intense research, he also completed his Anesthesiology residency at Ohio State (1994) and took a position as Assistant Professor in the Department of Anesthesia & Critical Care at the University of Chicago. He founded the Cognitive technologies Laboratory (CtL) there in 1996, obtaining over $2 million in competitive external research funding over the next 15 years (quite the accomplishment for that time in the history of patient safety). He was promoted to Associate Professor in 2002.

His work on the human contribution to anesthesia and patient safety put Richard at the intersection of Human Cognitive Factors, human-computer/automation interaction, and health care. This was a rare combination of expertise. As a result, Richard played a key role in the start and expansion of the patient safety movement from 1995 into the mid-2000s in the US and internationally. He worked tirelessly during this period to bring together the different stakeholders and educate medical leaders, clinical professionals, and leaders of government agencies about the science and practice of Human Factors, Cognitive Systems Engineering, and Safety in Complex Systems. (Richard’s influential contributions are captured in two books with diverse perspectives on the patient safety movement: one by Lucian Leape, Making Health Care Safe (2021), and the other by Robert Wears and Kathleen Sutcliffe, Still Not Safe (2019).)

In one notable event in 1992, he and his partners had been studying the impact of new technology in the operating room when he received a call that “something interesting” was going on in the OR. The team arrived to find a computerized infusion device was delivering clinically significant medication when it was apparently turned off (now into a garbage can instead of into a patient after quick action by the anesthesia team that day). It was another case of “fail-active” misbehavior of automation. Over the next 48 hours, Richard used his eclectic skills, including ones from his ham radio days, to build an apparatus to reveal the actual inner workings of the otherwise opaque interface and automated device. The results from the detective work this enabled revealed many design problems — ways the poor design of human-computer/automated systems creates new risks (patterns highlighted in Behind Human Error in 1994 using results from aviation, space, and medicine).

Richard helped start up the National Patient Safety Foundation in 1996 and was one of the founding board members, serving on the Executive Committee. As part of the NPSF activities, Richard produced a monograph in 1998, A Tale of Two Stories: Contrasting Views of Patient Safety which anticipated later developments in systems safety in general. From 1998-2000 Richard advised the head of the US Veterans Health Administration (VA) on how to start patient safety initiatives across the VA. In 2000, Richard published the landmark paper Gaps in the continuity of care and progress on patient safety in the British Medical Journal. This led to the creation of the “Gaps Center” in 2000 — a VA-funded patient safety campaign developed and carried out in conjunction with the Ohio VA, and Richard was appointed as co-director. His work at this time anticipated the rise of the new field of Resilience Engineering. Richard used non-traditional methods as well to promote learning about how safety is created and lost. One of these was the highly-condensed synthesis of key findings about “How Complex Systems Fail” originally from 1998. This four-page synoptic piece has become massively influential in many fields (initially it was squeezed into only two pages of card stock for easy reference since the internet & Google were in their infancy).

Another teaching innovation Richard created was a role-play simulation that starts 24 hours after a major aviation accident — The New Arctic Air Crash simulation. The simulation is an experiential learning tool that highlights how learning from accidents is often side-tracked and fails to discover critical systemic factors. It is based on the in-depth official investigation results of an aircraft accident in Canada. Richard orchestrated the production with participants from every clinical area and every level of management of the VA of Ohio. The participants took on first-hand roles in the effort to understand how the crash could have occurred. As in the real-world case, at first blush, it seems to be an obvious case of human error. As more information is revealed, it becomes clear this was in fact an organizational accident as a variety of pressures trapped the flight crew in a dilemma. The role play simulation was then, and still is, a powerful tool for creating a culture of safety by revealing in a visceral way the factors behind the label ‘human error’. Richard produced the simulation for several other groups over the following years.

One of the most important lessons Richard learned is that the introduction of technology and automation may mitigate or prevent some kinds of failures, but that technology also introduces new kinds of failure risks. The cases explored in the monograph “A Tale of Two Stories” highlighted this fact and illustrated how to build synergies between people and technology in a joint system carrying out difficult forms of cognitive work under a variety of pressures. Good design of joint cognitive systems of people and technology (an approach that began in 1982) depends on anticipating and mitigating the inevitable new risks. Richard’s research over the next few years continued to demonstrate this basic finding in studies of near misses and adverse events that involved the poor design of electronic medical records, computer order entry, bar code medication administration, and other new systems coming into practice (e.g., The Role of Automation in Complex System Failures, 2005). Richard’s research revealed what would come to pass: the adoption of poor designs would produce additional workload bottlenecks and risks that dominated promised benefits for clinical work.

The culmination came in 2011 when he served on an Institute of Medicine (IOM) advisory panel that examined the connection between technology and safety in health care. Standing almost alone for the scientific record and against the common myths about the impact of new technology, Richard stood up for patients, despite the personal costs, by including a dissent to the panel’s weak position on the new safety risks arising from poor design of health IT and on the need for regulations to mitigate these safety risks. Everyone interested in safety and technology in health care should know his dissent in Appendix E of the report Health IT and Patient Safety: Building Safer Systems for Better Care. Because of Richard’s dissent, the IOM report is also cited as the basis for regulation to mitigate safety risks from IT in healthcare.

In 2004 Richard became entangled in another new development — the rise of Resilience Engineering as an alternative approach to safety & complexity that emphasized the adaptive power of people. In 2004 Richard joined a group of leading researchers on systems safety in Sweden to discuss David Woods’ and Erik Hollnagel’s proposal that engineering resilience into human-technology systems was both possible and necessary given the growing complexity and interdependence of human systems. This first meeting on Resilience Engineering led to the first book of the new field—Resilience Engineering: Concepts and Precepts in 2006 with three chapters co-authored by Richard. During this time, he published other key papers in the emerging field based on his extensive field research analyzing incidents and accidents in health care such as Going Solid, Minding the Gaps, and Being Bumpable. His work was a major contribution to showing how operators at the sharp end are an unappreciated & uniquely critical source for resilient performance.

He continued to be an influential figure for the next 15 years in the rapidly growing field. He has delivered many invited talks, generously helped many Ph.D. students around the world, advised many organizations on how to take advantage of the new results, and worked to turn the new ideas into pragmatic actions for working organizations. He also was engaged in debates on the foundational theory for Resilience Engineering. Recently, his keynote talk Bone is Resilience is a major contribution to the foundations that will reverberate forward.

After the massive Haiti earthquake of 2010, Richard participated in a University of Chicago-sponsored medical mission to provide care to the injured. His leadership efforts resulted in that site generating the greatest volume of care delivered to the casualties of that disaster. Almost all of the news video about the relief work was recorded at that field hospital.

In 2012, Richard paused his clinical practice to become Professor of Healthcare Systems Safety at the KTH Royal Institute of Technology in Stockholm, Sweden. He was quite excited on many fronts: the ability to work intensely training graduate students, the opportunity to widen his set of productive partnerships and develop new ones around the world, and the adventure of living in Europe. He also was hopeful that the health care system there would be more supportive of investigating and learning from near misses and adverse events and then putting the lessons into action and change. He became Emeritus Professor at KTH in 2015.

Around 2015 a different adventure beckoned — one that took him back to his roots in software engineering, back to the US, and back to Ohio State and the ISE department. John Allspaw was one of the creators of Continuous Integration/Continuous Development in software engineering (also called DevOps). This approach is fundamental to almost all critical software infrastructure in the private sector and provides the software services that all of us rely on today.

As CTO of Etsy and as an organizer of the Velocity conference (focused on software operations and performance), John realized that results from Richard and others in Resilience Engineering provided the potential for a substantial advance in software engineering practice. David Woods, planning with Allspaw and other leaders in DevOps, invited Richard back to OSU to help start SNAFU Catchers, a new university-industry partnership to apply Resilience Engineering to software infrastructure. The new initiative allowed Richard to resume clinical practice part-time, take graduate students in a new direction, and work with top management of organizations that design and operate critical software infrastructure to deliver valued services.

The work of SNAFU Catchers centered on Learning from Incidents, a theme that echoes throughout Richard’s career. The STELLA Report from the SNAFU Catchers Workshop on Coping With Complexity is a non-traditional publication that takes advantage of the affordances technology provides for sharing results widely, which helped the piece achieve a wide reach and influence: 50K page views and 20K unique people who accessed the report. The ideas, techniques, results, and human network that grew from SNAFU Catchers have helped make Resilience Engineering a significant aspect of software engineering practices, including new conferences (e.g., REdeploy), new tooling, and even new companies putting the advances into practice. As always, Richard produced a paper that captures the heart of the matter, not just for software infrastructure but for all areas where people use technology to provide valued services safely. That paper is Above the Line, Below the Line: The resilience of Internet-facing systems relies on what is above the line of representation (2020).

Richard’s final shift was to start a successful company, Adaptive Capacity Labs, with John Allspaw (and with some help from David Woods, his partner in many adventures over 33 years). Their goal was to help client software companies put into practice the scientific results, empirical findings, and workaday techniques so these organizations can enhance and sustain their sources of resilient performance. Some of their clients are well-known across the globe. Many others are only known in niche corners of the domain, but they provide the fundamental services which those “household names” critically depend on.

Overall, his body of work (ResearchGate) consists of 41 peer-reviewed publications, 39 conference proceedings (these are important in Human Factors), 6 technical reports, and 30 books/book chapters, with an h-index of 43 and almost 10K citations. His CV lists more than 225 invited lectures, which is certainly an under-count.

Richard Cook, which was he? Clinical Practitioner, Professor, Field Researcher, Human Factors specialist, Cognitive Systems Engineer, Designer of human-automation systems, Patient Safety Advocate, Change Agent, Teacher, Author, Innovator, Software Engineer, Pioneer of new fields such as Resilience Engineering. As a polymath, he was all of these, because by doing each, he learned more about all. Because he was committed to learning by doing, learning by detailed study of work as done, learning through interdisciplinary inquiry, and learning at the intersections, he was able to build unique expertise that broke traditional categories. This rare form of expertise mattered because he used it to create safety in health care and elsewhere, to lead R&D in unexplored directions, reject intellectual superficiality, and inspire a new generation of researchers, faculty, & designers.

Richard’s driving curiosity and accomplishments span many disciplines and domains, and his work will continue to influence every one of them for decades to come. He has been described as a “prophet,” a “genius troublemaker,” and someone who “exemplifies intellectual punk rock.”

But above all else, he showed all those who have known and worked with him how to be kind and giving of time and attention when it comes to supporting others. His keen (and often hilarious) wit has helped many cope with struggles and challenges of all shapes and sizes.

His Colleagues,

David Woods, John Allspaw, Michael O’Connor