I am an angel investor in Jeli.io, and could not be more excited for the product to come out of “stealth” mode, for many reasons!

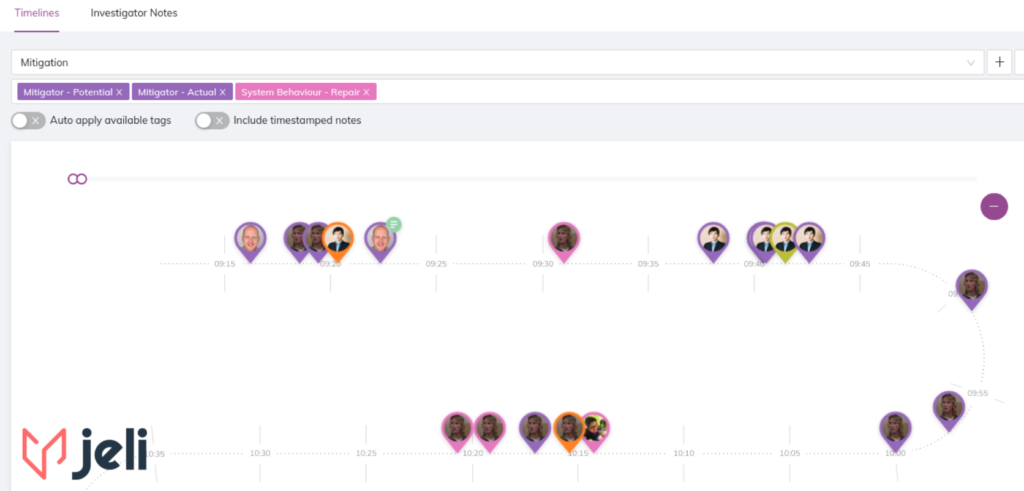

At its core, Jeli is built around the easy collection and annotation of this data, helping analysts make connections between chat transcripts, interviews, prior related incidents, and a whole host of other data sources. With this support, incident analysts will be able to build a richer description of the event more efficiently than they could without it.

We’re optimistic about where the industry is heading with respect to learning from incidents. We believe it is in the early stages of an important shift in perspective on effective incident analysis:

- what skills it requires,

- the rationale for building and continually supporting these skills, and

- the pragmatic value for companies who are doing this work well.

Learning from incidents effectively requires constructing the richest understanding of these complex events, from multiple perspectives, and representing an analysis in ways that can garner the broadest audience possible.

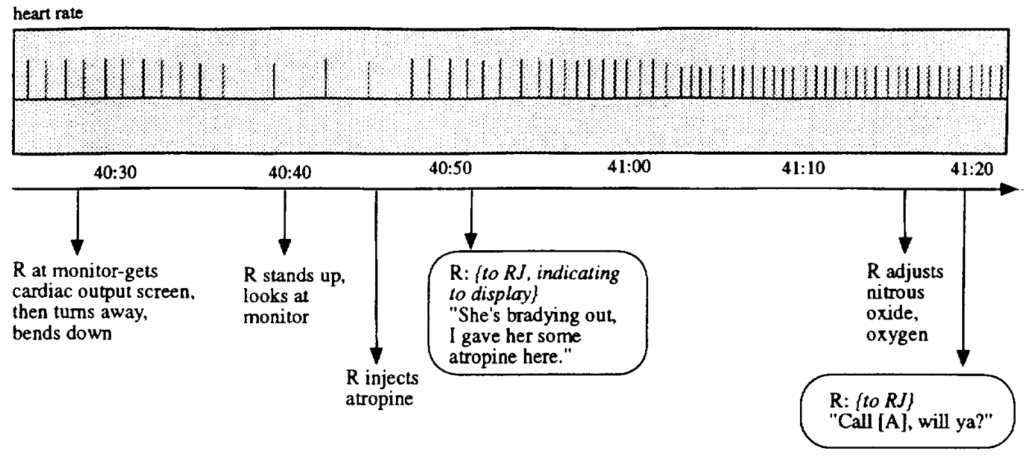

Effective analysis of an incident begins with collecting and collating qualitative data about the incident, which is typically found in multiple different sources. This data often includes:

- Data surrounding the handling of the incident. This commonly comes in the form of chat transcripts (e.g., Slack) or transcripts of audio/video bridge calls (e.g., Zoom).

- Data surrounding signals people receive (or reach for) as they try to understand what is actually happening (e.g., alerts, telemetry, metrics, exploration via queries, etc.)

- (…and many others)

This data helps analysts form a sense of the incident’s shape and choose some initial directions for their analysis. It also informs whom they might consider interviewing for their perspectives on the event.

Note that the sources listed above represent behavioral data. In other words, they ground the analysis in what people actually did (or “said”) at the time. This in-situ data can then be used to help answer questions such as:

- What do people understand about how these systems work normally – not just how they break?

- What appears to have been difficult for people to do — or understand — during the response to the event?

- What specifically were people confident about in their understanding of what was happening, and what were they uncertain about?

- What are people actually doing (by themselves, and with others) to understand how things are working when they’re uncertain?

- Was anything about the situation ambiguous? And if so, what?

- How do people know who to call on for help? What brings them to call those folks?

- What sort of authority do people have, and what authority do they believe they need from others in order to take certain actions? What are those actions?

- What surprises did people encounter?

- How do different teams coordinate their multiple — and often overlapping — activities with each other?

Those analyzing incidents need support not only in collecting this data, but also in collating, triangulating, evaluating, and connecting it to other sources of data in order to explore which themes and narrative elements are significant for the analysis.

Qualitative data analysis tools have existed for a long time, used mostly by researchers in the social sciences. However, these tools typically aim to provide a broad and generalized toolbox for doing analysis in mostly academic and industry research contexts.

Jeli could be thought of as a focused kind of QDA tool, built specifically for software-related incident analysis.

This is why I invested in Jeli.io, and what makes us at Adaptive Capacity Labs excited about their work!